Script: Inactive Parallel QC Holding Parallel Processes

Working on a data warehouse system can be quite challenging, as I mentioned in yesterday's post. One of the things we need to watch is the number of parallel processes in use at any given time. Yesterday I wrote about how to locate downgraded sessions. Today we will look at another aspect – who “steals” parallel processes and what we can do about it.

Some of the biggest thieves of parallel processes in a data warehouse environment are actually developers and DBAs. Sometimes, while developing or just handling the system, DBAs run queries in GUI tools (TOAD, PL/SQL Developer, SQL Developer). That in itself isn’t a big deal, but those tools have a common feature: instead of returning the entire data set, they sometimes return only the first few records (the number depends on the tool). In that case, the cursor is kept open, waiting for the next fetch or until a different query is run. While it waits, the parallel processes stay reserved for that query, even though the query coordinator (QC) is marked as idle.
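To make the situation concrete, here is a minimal sketch of the detection logic in Python. It is not the query from this post. It works on plain dictionaries whose keys mirror columns of `V$SESSION` (`sid`, `status`, `last_call_et`) and `V$PX_SESSION` (`sid`, `qcsid`). The function name and the 600-second idle threshold are assumptions made for illustration, and the sketch assumes a single instance (no RAC).

```python
from collections import defaultdict


def idle_coordinators(sessions, px_sessions, min_idle_seconds=600):
    """Return {qcsid: PX servers held} for idle query coordinators.

    `sessions` mirrors V$SESSION rows and `px_sessions` mirrors
    V$PX_SESSION rows. The idle threshold is a hypothetical default.
    """
    by_sid = {s["sid"]: s for s in sessions}

    # Count the PX servers attached to each coordinator.
    held = defaultdict(int)
    for px in px_sessions:
        # A row whose sid equals its qcsid is the coordinator itself.
        if px["sid"] != px["qcsid"]:
            held[px["qcsid"]] += 1

    # Keep only coordinators that are idle for long enough.
    result = {}
    for qcsid, count in held.items():
        qc = by_sid.get(qcsid)
        if (
            qc is not None
            and qc["status"] == "INACTIVE"
            and qc["last_call_et"] >= min_idle_seconds
        ):
            result[qcsid] = count
    return result
```

Against a real database the same condition would be expressed as a join between the two views. The point of the sketch is only the rule itself: an inactive coordinator that still has PX servers attached.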

If there are a couple of DBAs running those queries from multiple windows and they “forget” their queries (because sometimes they run for a very long time), that becomes a problem: “real” application queries get downgraded, causing the mess I described yesterday.

To solve that, we created the following query. We actually took that query and wrapped it in a shell script to kill the session automatically, but let’s stick to the basic query we used.

Script: Finding Session With Downgraded Parallel Execution

I was working with the data warehouse team at a customer site, and at some point we realized that some parallel executions were not getting enough resources (they were downgraded).

Not getting enough parallel processes in such a complex environment is really bad. Since everybody is hogging the CPU, some sessions will not be able to complete inside the nightly ETL time frame. If that happens, some ETLs will run on into the day, providing wrong data to the customer and even worse performance for the morning shift. Another aspect is memory usage for large sorts or hash joins. Using fewer processes means some of the data will not fit in memory and will spill to the temporary tablespace.

The customer asked how he could find those downgraded sessions (meaning, sessions not getting enough parallel processes) in real time. This is the script we created for that.
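As a rough illustration of what “downgraded” means in practice, the sketch below compares the granted degree of parallelism with the requested one. It is a Python stand-in, not the script from this post. The dictionaries mirror the `qcsid`, `degree` and `req_degree` columns of `V$PX_SESSION`, and the function name is hypothetical.

```python
def downgraded_coordinators(px_sessions):
    """Return {qcsid: (degree, req_degree)} for downgraded executions.

    An execution counts as downgraded when it was granted a lower
    degree of parallelism than it requested.
    """
    result = {}
    for px in px_sessions:
        degree = px.get("degree")
        requested = px.get("req_degree")
        # Rows without degree values (typically the coordinator's
        # own row) are skipped.
        if degree is None or requested is None:
            continue
        if degree < requested:
            result[px["qcsid"]] = (degree, requested)
    return result
```

A query that asked for 16 processes and received 4 would show up as `(4, 16)` under its coordinator's SID.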

Manually Generating AWR Report

One of my customers asked me to check performance on his production database server but could not allow any access to the server itself. He asked if I could generate the AWR reports from his client machine, and since that’s not exactly trivial (though not hard either), I created this script.

ilOUG Oracle Technologies Meetup Group

I have a confession: I love Oracle User Groups. Here. I said it.

I think that the idea of a community gathered around common professional interests and expertise is great. It’s an opportunity to meet your colleagues and learn from your peers a thing or two about technologies you don’t get to work with on a daily basis. For junior DBAs it’s even more important: they get to look into their future and see where they want to be when they are more experienced. In my opinion, networking is SUPER important!

tl;dr: ilOUG is reinstating the quarterly SIG meetings, I’m one of the organizers, join us if you’re in Israel!

https://www.meetup.com/ilOUG-Oracle-Technologies-Meetup

For the longer version, read below… 🙂

Script: Finding the Top N Queries for a User (AWR)

In some situations, I need to find the top N queries for a specific user in the database.

Assuming my customer is running Enterprise Edition and has tuning pack licenses, it is easy enough to pull the data from the Automatic Workload Repository (AWR).

For some reason, a lot of DBAs are not aware that the AWR report is just a report – and you can query the base tables yourself to extract more information if you need it.

This is a short script I sometimes find very useful – finding the top N queries for a specific user.

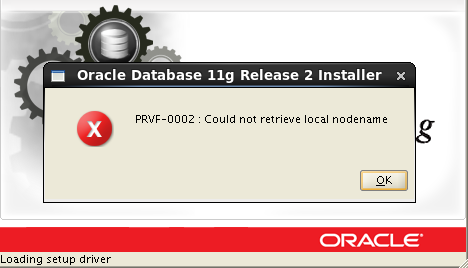

Fixing PRVF-0002 : Could not retrieve local nodename

Here is a common question I get from junior DBAs (and from operating system administrators who try to help by installing the Oracle Home themselves). The question usually sounds like this: “After we installed the new database server and changed its hostname, we tried to install the Oracle Home using runInstaller, but we hit the following error: PRVF-0002: Could not retrieve local nodename. How do we resolve it?”

The error message looks something like this (this is from Oracle 11g, but it happens in other versions as well):

Indeed, a problem – but what can it be?
